Visualising a Software Security Initiative

Posted by Craig H on 10 April 2013

Last month I was pleased to attend the BSIMM Europe Open Forum. BSIMM is a model for assessing software security activities within an organisation; I have been following it since its first release in 2009, and over the last several months I’ve been able to use it in earnest at Visa Europe.

For me, the most interesting discussion at the forum was on presenting BSIMM assessment results in a visually compelling way. The BSIMM document uses spider charts, which hide potentially valuable information about activities at lower maturity levels. Sammy Migues presented a format he uses at Cigital, called “equalizer diagrams”, which reveal that information but lack the comparison with a benchmark.

I decided to ask Louise (the other half of Franklin Heath) about this, as data visualisation is one of her principal skills. We’ve come up with something I like to call a “DIP switch diagram”, which I will explain in this post. If you’re familiar with BSIMM and you want to cut to the chase, you can skip straight to the diagram.

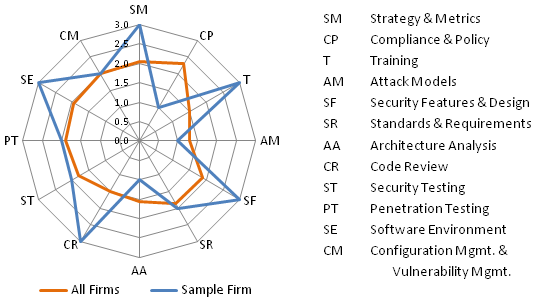

First let’s consider a spider chart, as used in BSIMM4:

The “sample firm” data is the made-up example used in BSIMM4, to spare the blushes of any of the real participants :-).

A full BSIMM assessment contains 111 data points, showing whether a specific software security activity has been observed in the subject firm or not. That’s a lot of information to take in at a glance, so it’s useful to have a chart or diagram which summarises it. BSIMM groups the activities into 12 practices, which are further grouped into 4 domains. Each activity has a defined maturity level of 1, 2 or 3. The blue line in the spider chart above shows the highest level of maturity of any activity observed within each practice. The orange line shows the average of that measure across all 51 members of the BSIMM community.
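As a rough sketch of how that per-practice "high-water mark" could be computed, here is a small Python example. The observations are invented for illustration and are not BSIMM data; the tuple layout and the function name `high_water_marks` are assumptions of this sketch, not part of BSIMM.

```python
from collections import defaultdict

# Hypothetical observations: (practice, maturity level, observed in the firm?).
# These values are made up for illustration only.
observations = [
    ("Training", 1, False),
    ("Training", 1, True),
    ("Training", 2, False),
    ("Training", 3, True),
    ("Code Review", 1, True),
    ("Code Review", 2, False),
]

def high_water_marks(observations):
    """Return the highest maturity level observed in each practice (0 if none)."""
    marks = defaultdict(int)
    for practice, level, observed in observations:
        marks[practice] += 0  # make sure every practice appears, even with no hits
        if observed:
            marks[practice] = max(marks[practice], level)
    return dict(marks)

print(high_water_marks(observations))
```

Note that a single observed level 3 activity is enough to put Training at the maximum, however many lower-level activities are missing.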

Looking at that chart, we might immediately conclude that we’re doing a great job at Strategy & Metrics, Training, Security Features & Design, Code Review, and Software Environment as we’ve got the highest possible score in all those practices, so we don’t need to worry about those areas at all. Unfortunately that might be completely the wrong conclusion, as those 5 practices include 47 activities and, to take an extreme case, we might be doing only 5 of the 47 to get those impressive maximum scores, if those 5 activities happen to be level 3 ones in different practices.

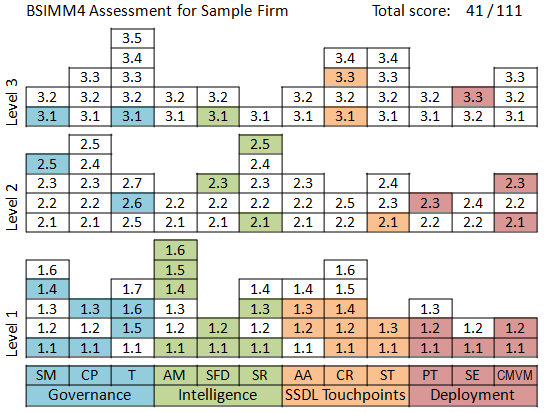

This is where the equalizer diagram comes in (so called, I suppose, because it looks a bit like a graphic equalizer display). The following diagram is based on what I remember from Sammy’s presentation:

This is using the same data as the spider chart, but it tells a quite different story. Here we can see, for example, that there is plenty of room for improvement in the Training practice: although the spider chart looked like we were already doing the maximum, this shows we are only doing 4 out of 12 activities.
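The numbers behind a diagram like this are simple counts. The following Python sketch tallies observed activities against the total available, per practice and level. It is based only on the description above, not on Cigital's actual format; the data and the name `equalizer_counts` are hypothetical.

```python
from collections import Counter

# Hypothetical observations: (practice, maturity level, observed in the firm?).
# These values are made up for illustration only.
observations = [
    ("Training", 1, True),
    ("Training", 1, False),
    ("Training", 2, False),
    ("Training", 2, False),
    ("Training", 3, True),
    ("Code Review", 1, True),
]

def equalizer_counts(observations):
    """Map (practice, level) to (activities observed, activities available)."""
    observed = Counter()
    total = Counter()
    for practice, level, seen in observations:
        total[(practice, level)] += 1
        if seen:
            observed[(practice, level)] += 1
    return {key: (observed[key], total[key]) for key in total}

for (practice, level), (done, available) in sorted(equalizer_counts(observations).items()):
    print(f"{practice} level {level}: {done} of {available}")
```

Unlike the high-water mark, these counts expose the gaps at levels 1 and 2, but they still say nothing about what other firms are doing.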

The equalizer diagram doesn’t tell the full story though, because it doesn’t show how we are doing compared to our peers. This is important because, as BSIMM says, “not all organizations need to achieve the same security goals” and “there is no inherent reason to adopt all activities in every level for each practice”. The 3 levels of maturity provide coarse-grained information on how frequently activities are observed: “Level 1 activities (straightforward and simple) are commonly observed, Level 2 (more difficult and requiring more coordination) slightly less so, and Level 3 (rocket science) are much more rarely observed.” We wondered whether we could show a bit more information than that.

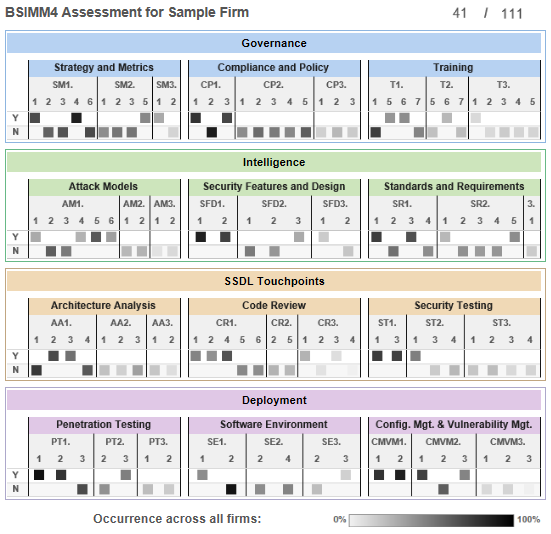

Louise created a diagram using Tableau, her current favourite visualisation tool. The basic elements in our diagram resemble DIP switches, and the shade of each switch represents the fraction of all firms in the study which perform that activity. You can click to open an interactive version of the diagram:

As the activities which are more commonly observed are darker in the diagram, it draws our attention to those first. For example, we can see that the Compliance and Policy level 2 activities are quite commonly performed, but we aren’t doing any of them; it would therefore be a good idea to look at the details of our assessment for those activities to find out if there is a good reason for not doing them.
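The same reading of the diagram can be sketched in Python: compute the fraction of firms performing each activity (the shade of the switch) and list the commonly performed activities that our firm is not doing. This is only an illustration, not how the Tableau diagram was built. The firm counts are invented, the activity IDs other than [T1.1] are placeholders in the same style, and the 0.5 threshold and the name `common_gaps` are assumptions of this sketch.

```python
TOTAL_FIRMS = 51  # size of the BSIMM community quoted in this post

# Hypothetical data: activity id -> (firms observed doing it, observed in our firm?).
# The counts are made up for illustration only.
activities = {
    "CP2.1": (30, False),
    "CP2.2": (28, False),
    "T1.1": (40, False),
    "T3.1": (4, False),
    "SM1.1": (35, True),
}

def common_gaps(activities, total_firms, threshold=0.5):
    """List activities we are not doing that at least `threshold` of firms perform."""
    gaps = []
    for activity, (firm_count, ours) in activities.items():
        fraction = firm_count / total_firms  # drives the shade of the switch
        if not ours and fraction >= threshold:
            gaps.append((activity, round(fraction, 2)))
    # Darkest (most commonly observed) switches first
    return sorted(gaps, key=lambda gap: gap[1], reverse=True)

for activity, fraction in common_gaps(activities, TOTAL_FIRMS):
    print(f"{activity}: performed by {fraction:.0%} of firms, not observed here")
```

A rarely performed activity such as the level 3 placeholder drops out of the list, which matches the intuition that it deserves less attention than a widely adopted one.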

Referring to the Training practice, where the spider chart might persuade us we’re doing more than needed, and the equalizer diagram might persuade us we’re doing less than needed, this diagram shows us that we are probably doing OK: few firms in the study are doing level 2 or 3 activities in this practice. We should definitely ask ourselves why we aren’t doing [T1.1] but, apart from that, we probably have more important activities to worry about in other practices.

As well as comparing against the full set of firms in the BSIMM community, we can also use this diagram to compare against a particular industry sector. BSIMM4 includes activity counts for two sectors broken out from the total: Financial and ISV (Independent Software Vendors). Click here for a DIP switch diagram shaded for Financial firms; it’s not drastically different, but it does highlight the gap in the Compliance and Policy practice even more, as you might expect.

This concept could be refined further, but we thought it would be interesting to share what we have and get feedback as to whether this is a useful line of enquiry or not. Please let us know what you think in the comments below, and thanks for reading this far!

noplasticshowers said

Dang. Sammy’s meme is propagating.